Ph.D. in Computer Sciences

University of Wisconsin-Madison, advised by Prof. Suman Banerjee

I am a systems researcher who builds both hardware and software for intelligent edge systems. My work focuses on on-device AI, reinforcement-learning post-training for efficient edge intelligence, and wireless sensing systems.

I build the full stack: custom wearable and embedded hardware, accelerator-aware runtimes, sensing platforms, model adaptation pipelines, and post-training methods that make AI run under real-world compute, memory, energy, and privacy constraints. My work has appeared in MobiCom, ICLR, NSDI, and SenSys.

My current Re-Mind project explores a privacy-preserving on-device cognitive assistant with real-world episodic memory for daily-life accessibility and individualized support. See the Re-Mind Slides.

Recent Momentum

2026.05

MEDUSA repository is available on GitHub.

2026.03

CRANE is now open-sourced for direct Apple Neural Engine inference without Core ML.

2026.01

Our work on efficient multimodal inference for battery-powered small devices was accepted to ICLR 2026.

2025.06

Our work on scalable biometric sensing in the wild through distributed MIMO radars was accepted to MobiCom 2025.

2025.01

PalmBench, our benchmark of compressed large language models on mobile platforms, was accepted to ICLR 2025.

Selected Output

Recent work on efficient multimodal inference, mobile AI benchmarking, and wireless sensing systems.

Repeated sampling with a verifier is a standard way to allocate test-time compute for code generation, but drawing K independent samples from one answer distribution often wastes the pass@K budget on near-duplicate re...

EMBER studies Budgeted Pre-Query Retention for long-horizon agents: an agent ingests a stream before future queries are known, keeps only a fixed budget of source evidence, and later answers from that retained memory ...

Large Multimodal Models (LMMs) are inherently modular, consisting of vision and audio encoders, projectors, and large language models. Yet, they are almost always executed monolithically, which underutilizes the heter...

Radar-based techniques for detecting vital signs have shown promise for continuous contactless vital sign sensing and healthcare applications. However, real-world indoor environments face significant challenges for ex...

Deploying large language models (LLMs) locally on mobile devices is advantageous in scenarios where transmitting data to remote cloud servers is either undesirable due to privacy concerns or impractical due to network...

Integrating millimeter wave (mmWave) technology in both communication and sensing is promising as it enables the reuse of existing spectrum and infrastructure without draining resources. Most existing systems piggyback...

Many types of human activities involve interaction with passive objects. Thus, by wirelessly sensing human interaction with them, one can infer activities at a fine resolution, enabling a new wave of ubiquitous comp...

Research Systems

Three current research lines: reinforcement-learning fine-tuning for small models, wireless human sensing, and on-device AI systems.

RL Fine-Tuning for Small Models

Improving small language models with RL fine-tuning, supervised fine-tuning, and memory policies for long-horizon agents under tight compute and context budgets.

View project context

Wireless Human Sensing

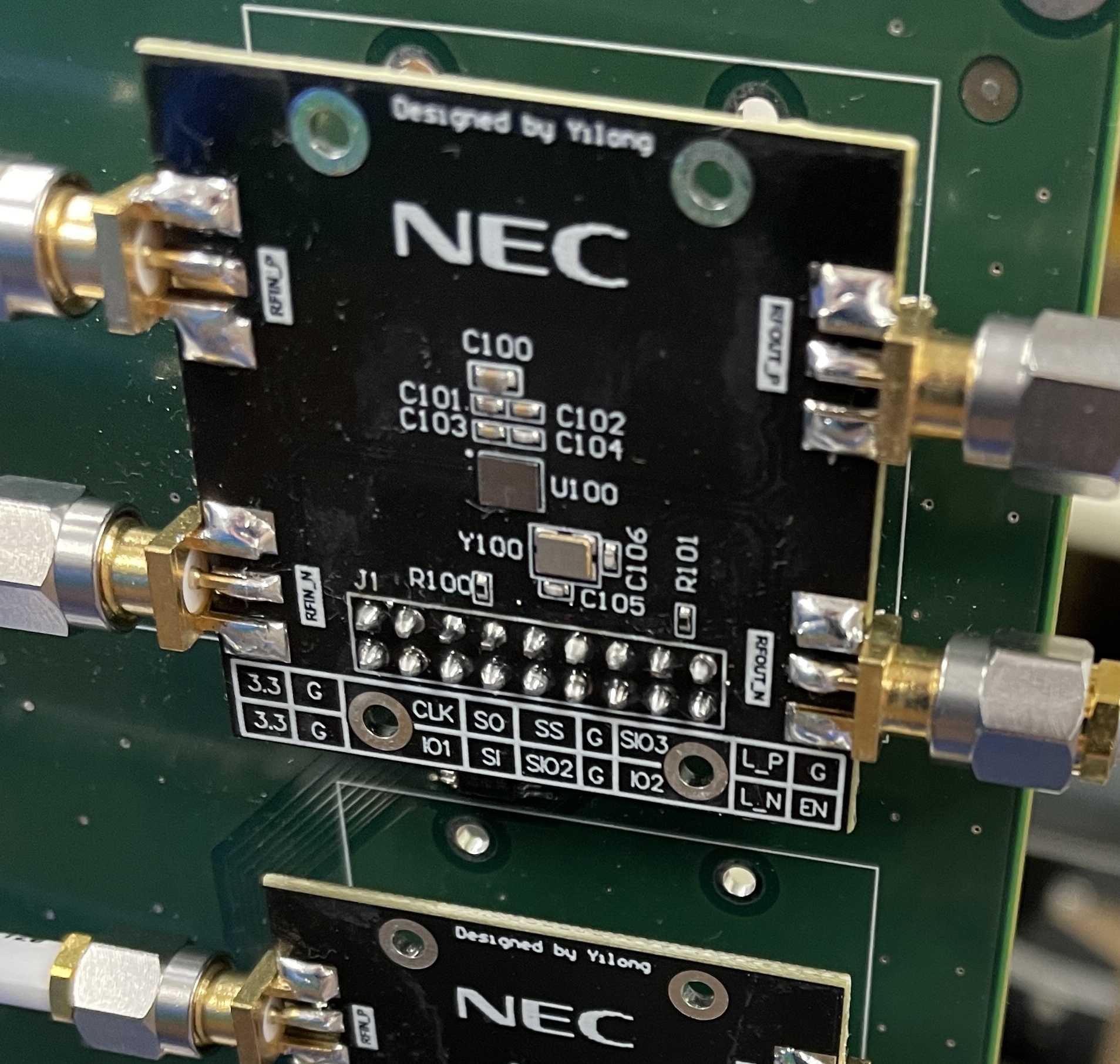

Ongoing work on in-the-wild pupillometry with glasses-mounted 60 GHz mmWave radar, front-facing illumination context, and light-compensated cognitive sensing.

View project context

On-Device AI

Multimodal assistants that run on small devices, combining custom hardware, embedded runtime software, local visual understanding, and persistent memory.

View project context